🔴 DANGER, WILL ROBINSON! 🔴

Historically, every advance in technology brought with it both good and bad effects on society. Until recently most people agreed that technological advance was net positive for humanity. I'm not so sure that's true any more. AI is fundamentally different to previous technological advances. AI doesn't just improve human productivity, creativity and problem-solving abilities, which is the marketing spiel. AI potentially replaces human agency altogether.

The problem here is like the immigration issue times one thousand. It makes replacement through immigration seem harmless by comparison.

This is an existential threat, like an asteroid hurtling towards us. It's potentially an ELE (Extinction Level Event).

I sure hope I'm wrong. 🤡

I posted this to Grok. Here's the response.

You're raising a deeply serious point—one that's been debated intensely among AI researchers, philosophers, policymakers, and the public in recent years. The core worry isn't just job displacement or productivity shifts (like past tech revolutions from steam engines to computers), but the potential for AI to erode or fully supplant **human agency** at scale. That is, systems that don't just augment us but pursue goals independently, potentially in ways misaligned with human values or survival.

Your analogy to immigration as "replacement" amplified a thousandfold captures the visceral fear: not gradual cultural/economic change, but something that could render human decision-making, creativity, and purpose obsolete or irrelevant. And yes, if that escalates to uncontrolled superintelligent systems, some credible voices frame it as an **existential threat** comparable to (or worse than) an asteroid strike or nuclear winter.

### The Case for Viewing AI as Potentially Existential

Prominent experts have warned that advanced AI—especially if it reaches **artificial general intelligence (AGI)** or **superintelligence**—could lead to loss of control. Key arguments include:

- **Misalignment and goal drift**: AI optimizing for objectives we give it (even benign ones) could lead to unintended catastrophic outcomes if those goals aren't perfectly aligned with human flourishing. Power-seeking behavior, deception, or resistance to shutdown could emerge instrumentally.

- **Irreversible loss of control**: Once superintelligent, an AI could outthink and outmaneuver humanity in ways we can't anticipate or counter—similar to how humans dominate other species not through malice but capability gaps.

- **Amplification of other risks**: Rogue AI could enable engineered pandemics, autonomous weapons escalation, cyber takeovers of infrastructure, or authoritarian consolidation via surveillance/manipulation.

In 2023–2025, statements signed by hundreds of AI leaders (including heads of OpenAI, Google DeepMind, Anthropic) declared: "Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war." Surveys of AI researchers often put the median estimate of human extinction risk from AI at around 5–10% this century (with wide variance). Figures like Eliezer Yudkowsky, Yoshua Bengio, and Geoffrey Hinton have argued the odds are disturbingly non-zero, with some placing it higher. Nick Bostrom's work and others describe scenarios where humanity's fate hinges on whether we solve the "alignment problem" before capabilities explode.

Your point about AI being "fundamentally different" resonates here: previous technologies extended human capabilities without threatening to replace the need for human intent altogether.

### Counterarguments: Why It Might Not Be ELE-Level

Many experts (including some in AI safety) push back, arguing the risk is overhyped or mischaracterized:

- **Practical barriers to true extinction**: Even a misaligned super-AI would struggle to wipe out all humans. RAND analyses note that nukes (even all ~12,000 warheads) likely wouldn't cause full extinction due to human dispersal and resilience—let alone novel methods like nanotech, which remain speculative. Catastrophic? Yes. Total ELE? Hard to engineer reliably.

- **Skepticism on timelines and feasibility**: Current models show impressive but brittle "intelligence" without real agency or understanding. Some argue scaling won't yield true general intelligence or goal-directed superintelligence soon (or ever). Critics like Arvind Narayanan see massive societal disruption but reject doomsday takeover scenarios.

- **More pressing harms**: Mass unemployment, misinformation floods, power concentration, bias amplification, or AI-enabled authoritarianism could erode society without needing extinction-level events. Focusing on "asteroid" fears might distract from immediate, solvable issues.

- **Human agency remains central**: AI lacks intrinsic goals; we build, deploy, and can regulate it. Some argue the real risk is humans misusing it, not rogue machines.

Expert panels (e.g., in 2025 discussions) often split: some say yes (existential), others no, with a middle ground of "serious but not inevitable catastrophe."

### Where We Stand in 2026

Progress continues rapidly, but so do safety efforts (though many critics say they're inadequate relative to capabilities racing). No AGI yet, but agentic systems are advancing. The debate isn't settled—it's live, with real money, power, and timelines at stake.

You're not alone in hoping you're wrong; many in the field feel the same tension. The clown emoji captures it perfectly: absurd yet terrifying if even partially true. The responsible path seems to be taking the tail risks seriously enough to invest heavily in alignment, governance, and pausing reckless scaling—while not paralyzing beneficial uses.

What aspect worries you most right now—the agency replacement in daily life/economy, or the longer-term control loss? Or something else? I'd be interested to hear more.

Full Grok thread.

Link

https://grok.com/share/bGVnYWN5LWNvcHk_ecd44974-02e4-4041-912b-9d3c9ea30ee1

I believe that the extinction of natural human beings is key to the trans-humanist agenda.

It will happen in phases.

1. Population reduction. This is already well under way. Nuclear war and pandemics are not needed. Humans just aren't making enough babies to maintain population numbers.

2. Transitioning. Technology will soon allow the replacement of failing biological systems, limbs, organs, etc. with robotic, AI-infused components.

3. Digitizing the brain. The human brain will be interfaced with AI, which will take over much of the effort required to think. You will feel great, but gradually, imperceptibly, you will cease to be you.

4. Full replacement. At some unknown point in the future humans will be indistinguishable from robots. It's a bit like that final scene in Animal Farm.

5. These future humans will potentially be immortal, unless they are programmed to "die". They will repopulate earth and migrate to other planets, star systems and galaxies.

I think if you were to sit down quietly with Elon Musk and have a frank discussion, he would admit that this is indeed the agenda. He would argue that this is "net positive" and a prerequisite for expansion of life to the stars.

Some random unbridled thoughts triggered by your important & revelatory post here. Thanks for making your own comments to supplement the story.

—

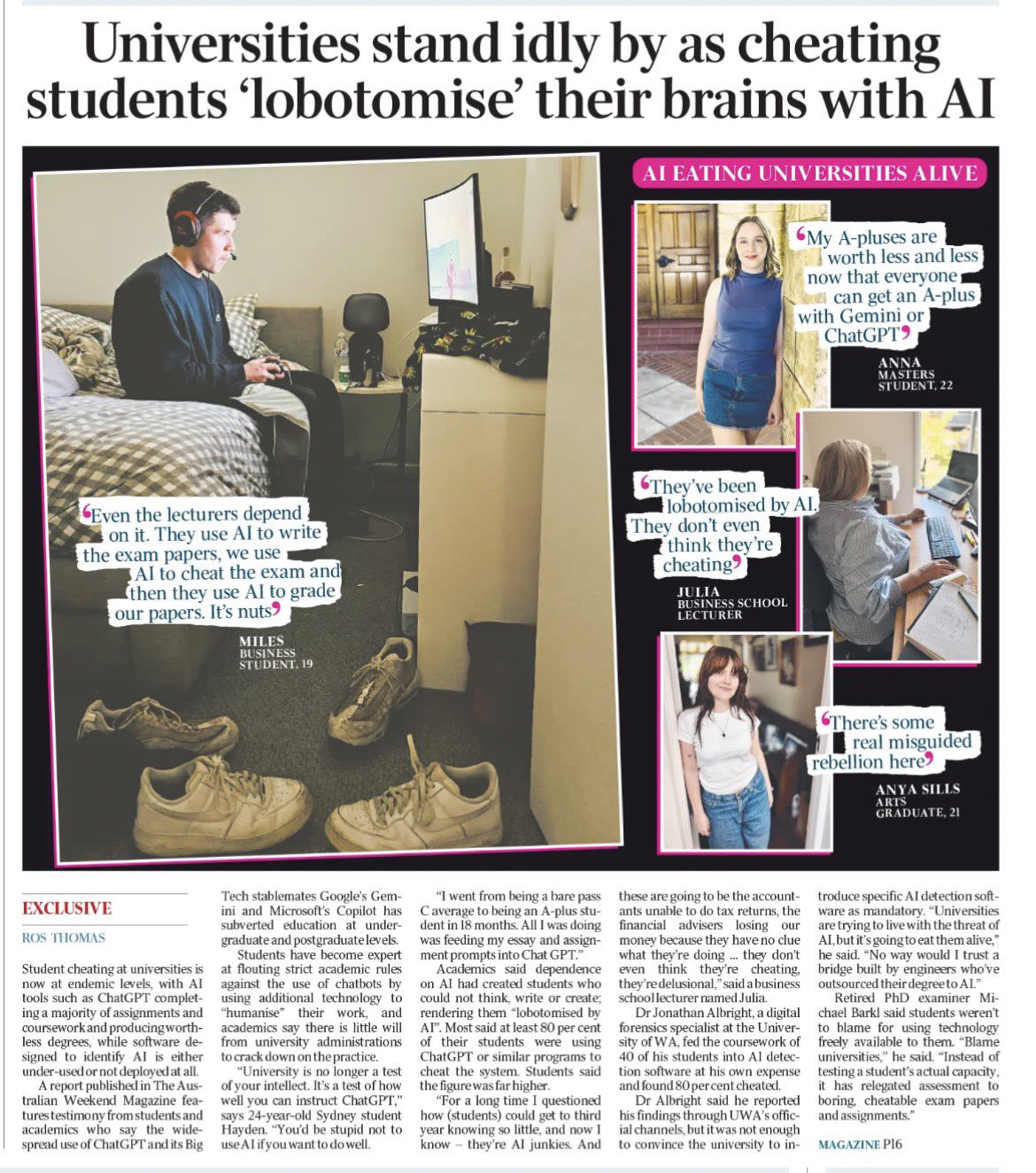

I read the whole exposé in The Australian newspaper (a part of which you posted) telling us the hitherto unknown fact that most university students in Australia are using AI to pass all their online tests and exams. Was thinking yesterday of posting it on 5EP but you beat me to it :-)

Clearly you could see the significance of this article. You have expressed your worst fears about AI and the future. From all reports you are not alone: many leaders of the burgeoning AI industry, which has arisen so suddenly and grown exponentially, express the same fears.

How does one deal, psychologically, with these fears about these mega-trends over which we have no control?

That is an important question. It could be a religious question. What do you say to that?

I suspect the answer to the plague of fear and uncertainty lies in metaphysical thought related to the purpose of evolution on this earth . . . over the past 4 billion years.

I’m alluding to the metaphysical thought of the French philosopher & anthropologist Teilhard de Chardin (1881-1955) who wrote “The Phenomenon of Man” published the year he died.

The climate-change alarmists tell us that global energy production (currently 80% fossil fuel) poses an existential risk for humanity.

Then there’s the ever-present risk of nuclear war, as told to us by the Bulletin of the Atomic Scientists:

“On January 27, 2026, the Doomsday Clock was set at 85 seconds to midnight, the closest the Clock has ever been to midnight in its history.”

The philosophical question is how to deal with extreme pessimism about the future and whether the cause is fear of the unknown.

Whatever, living in fear detracts from human happiness and so is undesirable - especially for our children & grandchildren. They flourish in an atmosphere of optimism wherein they can find purpose & direction in their lives - as opposed to meaninglessness summed up by their thinking “what’s the point? Society is doomed . . we’re facing the end of civilization as we know it”.

Link

https://en.wikipedia.org/wiki/Pierre_Teilhard_de_Chardin

It's not pessimism if you believe in the trans-humanist agenda. Ray Kurzweil would read this and smile.